The Funding Gap for Women Is an Algorithmic Governance Problem

- Isabel Velarde

- Apr 4

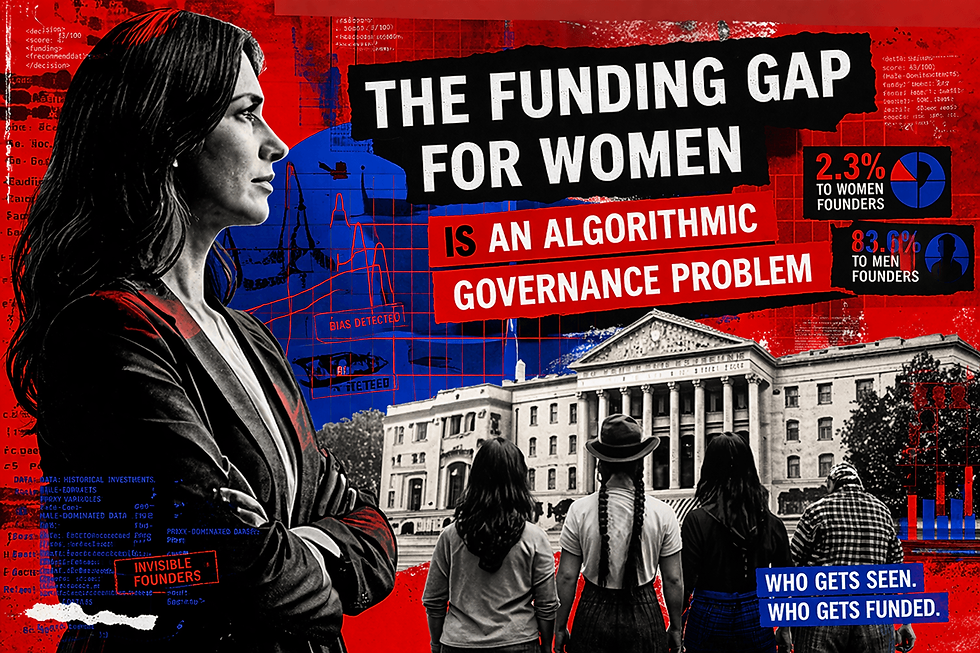

Someone on your investment committee approved a scoring tool that evaluates which founders are worth a conversation. Almost certainly, no one asked what population that tool was calibrated on, what proxies it uses for entrepreneurial potential, or which founder profiles it structurally cannot see. That is not a technical oversight. It is a governance failure. And it is producing, with remarkable consistency, the same result: in 2024, all-female founding teams received 2.3 percent of the venture capital deployed globally, while all-male teams received 83.6 percent.

At current rates of improvement, gender parity in venture capital allocation arrives around 2065, according to projections cited by the World Economic Forum. This is not a projection about cultural attitudes. It is a projection about institutional systems that are making thousands of funding decisions per day using criteria no one examined, on behalf of institutions that have not asked what those criteria are.

The funding gap for women is not a pipeline problem. It is a governance problem. The pipeline is full. The evaluation system cannot see it.

The pipeline argument does not survive the data

The standard diagnosis holds that too few women are founding technology companies, or that those who do are founding the wrong kinds, or that the networks through which capital flows simply have not yet encountered enough qualified female founders. All of these explanations have the considerable advantage of suggesting that the problem will solve itself, given time and goodwill. None of them survives contact with the data.

In Latin America, where the Gender Equality in Technology Committee of the World Business Angels Investment Forum concentrates much of its work, women's total early-stage entrepreneurial activity rates in several countries approach or match those of men, according to the Global Entrepreneurship Monitor's 2024 Women's Entrepreneurship Report. The GEM documents that Latin America and the Caribbean record among the highest startup rates globally for women, largely because of necessity-driven entrepreneurship in contexts where formal employment offers few alternatives. Women are not absent from the entrepreneurial economy. They are present in it at rates that match or exceed global averages. What they are absent from is the formal capital allocation system that would allow their ventures to scale, generate employment, and produce the track records that institutional investors recognize as fundable.

The IDB Lab's wX Insights 2024 report, drawn from surveys of nearly 1,700 women-led STEM companies and interviews with more than 600 equity funds across Latin America, found that 38 percent of women entrepreneurs cited lack of financing as their primary obstacle. Their main challenges included contacting investors, navigating negotiation, and dealing with biases in investment decision-making. According to IDB data, only 27 percent of women entrepreneurs in the region secure credit, and only 15 percent obtain venture capital funding. Latin America has a large and growing base of women-led micro-businesses, most of which have never been evaluated by an institutional financing system. Most never will be, because the systems designed to evaluate creditworthiness and investment potential were not designed for the economy these businesses inhabit.

The algorithm did not decide women were less fundable. It learned from a history that had treated them that way. Then it automated that learning at scale.

How the mechanism works

The conventional account of algorithmic bias in financial decision-making treats it as a calibration error: the model was trained on insufficiently representative data, the outputs reflect that imbalance, better data will produce better outcomes. The account is not wrong so much as it is incomplete to the point of being misleading.

A study published in Science in 2019 by Obermeyer, Powers, Vogeli, and Mullainathan examined an algorithm used to manage care for millions of patients in the United States and found that it systematically underestimated the health needs of Black patients. Not because race was a variable in the model. Because historical cost had been used as a proxy for health need, and historical cost reflected decades of structural barriers that had prevented Black patients from accessing care at the same rate as white patients with equivalent conditions. The algorithm had not encoded discrimination. It had encoded the data that discrimination produced. But the effect is discrimination, and governance frameworks are responsible for effects, not intentions.
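The proxy mechanism can be made concrete with a toy calculation. The sketch below is not the algorithm examined by Obermeyer and colleagues. The patient records, the `access` factor, and every function name are hypothetical, chosen only to show how ranking on a proxy label (historical cost) can diverge from ranking on the target it stands in for (need), even though group membership never enters the ranking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Patient:
    group: str     # hypothetical group label; never used by the ranking
    need: int      # true health need (the thing the programme cares about)
    access: float  # assumed share of needed care the patient could obtain

    @property
    def cost(self) -> float:
        # Historical cost is need filtered through access barriers.
        return self.need * self.access


def select_top(patients, key, k):
    """Return the k patients ranked highest by `key`."""
    return sorted(patients, key=key, reverse=True)[:k]


def share_of_group(selected, group):
    """Fraction of the selected patients who belong to `group`."""
    return sum(p.group == group for p in selected) / len(selected)


# Illustrative data: both groups have identical need, but group B is
# assumed to have obtained only half of the care it needed.
needs = [2, 4, 6, 8, 10]
patients = (
    [Patient("A", n, access=1.0) for n in needs]
    + [Patient("B", n, access=0.5) for n in needs]
)

by_need = select_top(patients, key=lambda p: p.need, k=4)
by_cost = select_top(patients, key=lambda p: p.cost, k=4)

print("share of group B when ranking on need:", share_of_group(by_need, "B"))
print("share of group B when ranking on cost:", share_of_group(by_cost, "B"))
```

In this toy data, ranking on need selects the two groups equally, while ranking on cost fills three of the four places from group A. Nothing in the ranking refers to the group label; the disparity enters entirely through the choice of label.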

Research published in the Journal of Behavioral and Experimental Finance in 2025 by Liu and Liang at the University of Illinois demonstrated the same mechanism operating in credit scoring. Female borrowers consistently receive credit scores approximately six to eight points lower than men's, after controlling for payment history, amounts owed, and credit history length. Variables such as marital status, age, and credit limit function as proxies for gender within the models, allowing discriminatory pathways to persist while the model satisfies standard regulatory fairness metrics. Six to eight points sounds modest. Across multiple borrowing cycles, it produces higher interest rates, restricted credit limits, and reduced approval rates. Women's World Banking, in its research program on algorithmic bias initiated in 2020, documented a further complication: women are less likely to own a phone, less likely to own a smartphone, and less likely to access the internet. Under those conditions, any credit underwriting system that uses digital engagement as a proxy for creditworthiness will disadvantage women before any explicitly discriminatory variable is introduced.
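One simple way to see why removing the gender column does not remove gender from a model is to measure how well each remaining feature predicts it. The sketch below is a screening heuristic of my own for illustration, not the method used by Liu and Liang. The records are invented, and the field names (`credit_limit_band`, `region`) and functions (`proxy_strength`, `base_rate`) are hypothetical.

```python
from collections import Counter, defaultdict


def base_rate(records, protected="gender"):
    """Accuracy of always guessing the most common protected value."""
    counts = Counter(r[protected] for r in records)
    return counts.most_common(1)[0][1] / len(records)


def proxy_strength(records, feature, protected="gender"):
    """Accuracy of guessing `protected` from `feature` alone.

    For each feature value, guess the protected value seen most often with it.
    A result close to the base rate means the feature says little about the
    protected attribute; a result well above it flags a candidate proxy.
    """
    by_value = defaultdict(Counter)
    for r in records:
        by_value[r[feature]][r[protected]] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return correct / len(records)


# Invented records for illustration only.
records = [
    {"gender": "F", "credit_limit_band": "low", "region": "north"},
    {"gender": "F", "credit_limit_band": "low", "region": "south"},
    {"gender": "F", "credit_limit_band": "low", "region": "north"},
    {"gender": "F", "credit_limit_band": "high", "region": "south"},
    {"gender": "M", "credit_limit_band": "high", "region": "north"},
    {"gender": "M", "credit_limit_band": "high", "region": "south"},
    {"gender": "M", "credit_limit_band": "high", "region": "north"},
    {"gender": "M", "credit_limit_band": "low", "region": "south"},
]

print("base rate:", base_rate(records))
for feature in ("credit_limit_band", "region"):
    print(feature, proxy_strength(records, feature))
```

In this invented data, `credit_limit_band` recovers gender well above the base rate while `region` does not, so a model trained without the gender column could still reach it through the credit limit. A real audit would also need to check combinations of features, which can act as proxies jointly even when no single feature does.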

Reuters reported in 2018 that Amazon had quietly discontinued an internal recruiting tool after discovering it had learned to penalize signals associated with female candidates, including attendance at women's colleges. The system had been trained on ten years of the company's own hiring data, and that data reflected a decade of systematically male-dominated hiring. Amazon had, in a meaningful sense, automated its own historical bias and then been surprised to find it running.

This is the pattern. It repeats across sectors, geographies, and institutional types with the kind of regularity that indicates structural cause rather than accidental error.

The structural cause in Latin America

Latin American women entrepreneurs disproportionately operate in the informal economy, carry caregiving responsibilities that interrupt formal employment trajectories, build professional networks in communities that algorithmic screening does not recognize as high-value signals, and address markets that training data, calibrated on investment outcomes in other contexts, does not associate with high-growth potential. The Economic Commission for Latin America and the Caribbean's Social Panorama 2024 documents that the labor force participation gap between men and women in the region exceeds twenty percentage points, driven by the concentration of unpaid care work on women. The International Labour Organization's 2025 Labor Panorama places informal employment at 46.7 percent of the working population in Latin America and the Caribbean, and at 56 percent among workers between fifteen and twenty-four years old.

These are not the conditions in which a scoring model calibrated on formal employment continuity, regularized income, and dense banking histories will produce gender-neutral results. They are the conditions in which such a model will produce gender-discriminatory results consistently and without anyone in the institutional chain having authorized the discrimination.

The ILIA 2025 report, produced by CEPAL and Chile's National Center for Artificial Intelligence, documents that Latin America accounts for 14 percent of global visits to AI solutions while investing only 1.12 percent of global AI investment despite representing 6.6 percent of global GDP. The region is a large and enthusiastic consumer of systems it had almost no role in designing. That matters for gender equity in capital allocation because the populations most likely to be misread by systems calibrated for other markets are precisely the populations that Latin American investment ecosystems most need to reach: the informal entrepreneur, the founder whose employment history reflects caregiving rather than disengagement, the woman building a business in a sector that global training data has historically underfunded.

The World Economic Forum's Global Gender Gap Report 2024 documents that closing the global gender gap will take 134 years at current rates. A BCG analysis cited by the Forum finds that global GDP would rise between 3 and 6 percent annually if women entrepreneurs received the same investment as their male counterparts. The systems excluding women from capital access are also systems misallocating capital, directing resources away from opportunities that do not match the pattern their training data associates with success, while that pattern was itself produced by a history of exclusion. This is not a fairness argument dressed in economic language. It is an economic argument: the current system is destroying value by design.

Boards of directors approve budgets for algorithmic evaluation tools. What they almost never do is examine the criterion embedded in those tools and ask whether it was formed for the market where it is now being applied.

What governance frameworks must actually address

Boards of directors across the region approve budgets for algorithmic evaluation tools. They approve vendor relationships. They approve implementation timelines. What they almost never do is examine the criterion: the actual definition, embedded in the model's architecture, of what constitutes a fundable opportunity, a creditworthy borrower, or an eligible beneficiary. Nor do they ask whether that definition was formed for the conditions that obtain in the market where it is now being applied. The criterion arrives pre-packaged, calibrated elsewhere, and once installed it operates continuously, making thousands of evaluations per day, without any deliberative body having voted on the values it encodes or the populations it cannot see.

This is the governance failure. Not the bias. The delegation without examination.

Four requirements

The first is transparency of criterion. Any automated system used to evaluate investment opportunities, loan applications, or financial service eligibility must be capable of disclosing to the institution deploying it, and on request to individuals affected by it, what variables the system uses, what population it was calibrated on, and what segments of the actual market it cannot evaluate. The EU AI Act of 2024 establishes under Article 13 explicit transparency requirements for high-risk systems, including those used for credit evaluation, requiring that their operation be transparent enough for deployers to interpret the output and use it appropriately. The Act applies to the global providers whose systems Latin American institutions deploy. Its governance standards will migrate into those systems as a condition of continued access to the European market. Latin American regulators have the opportunity to incorporate equivalent standards before the systems requiring them are already embedded in institutional practice.

The second is disaggregated performance monitoring. Aggregate accuracy metrics certify systems as compliant while concealing systematic misclassification of specific populations. The Liu and Liang research demonstrates that models can satisfy standard fairness metrics, including disparate impact and demographic parity tests, while nonetheless producing gender-discriminatory outcomes through proxy variables that standard audits cannot detect. A governance framework relying exclusively on these metrics will certify as compliant systems that are producing discriminatory results. Brazil's AI bill, Chile's proposed AI regulation, and Peru's implementing regulations under Ley N° 31814 provide legal architecture for mandating disaggregated monitoring. What most have not yet specified is the enforcement mechanism, the institutional body responsible for reviewing disaggregated results, and the consequences for institutions whose systems demonstrate systematic disparities they have not addressed.
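A minimal sketch of what disaggregated monitoring means in practice follows. It illustrates only the first point above, that an aggregate metric can look healthy while one group is systematically misclassified; it does not attempt the proxy-variable analysis in the Liu and Liang research. The screening outcomes are invented, and the field names and functions are hypothetical.

```python
from collections import defaultdict


def accuracy(rows):
    """Share of applicants whose predicted label matches the actual one."""
    return sum(r["predicted"] == r["actual"] for r in rows) / len(rows)


def false_negative_rate(rows):
    """Share of genuinely fundable applicants (actual == 1) the model rejected."""
    positives = [r for r in rows if r["actual"] == 1]
    if not positives:
        return None  # undefined when the group has no fundable applicants
    return sum(r["predicted"] == 0 for r in positives) / len(positives)


def disaggregate(rows, by):
    """Compute the same metrics separately for each value of the `by` field."""
    groups = defaultdict(list)
    for r in rows:
        groups[r[by]].append(r)
    return {
        g: {"n": len(rs), "accuracy": accuracy(rs), "fnr": false_negative_rate(rs)}
        for g, rs in groups.items()
    }


def make_rows(group, actual, predicted, count):
    return [{"group": group, "actual": actual, "predicted": predicted}
            for _ in range(count)]


# Invented screening outcomes for illustration only.
rows = (
    make_rows("men", 1, 1, 38) + make_rows("men", 1, 0, 2) + make_rows("men", 0, 0, 40)
    + make_rows("women", 1, 1, 4) + make_rows("women", 1, 0, 6) + make_rows("women", 0, 0, 10)
)

report = disaggregate(rows, by="group")
print("aggregate accuracy:", accuracy(rows))
for group, metrics in report.items():
    print(group, metrics)
```

On this invented data the aggregate accuracy is 92 percent, yet the model rejects 60 percent of fundable women against 5 percent of fundable men. A review body that sees only the first number has nothing to act on; one that sees the per-group rows does.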

The third is gender-informed data strategy. Brazil's PIX instant payment system offers the clearest regional model: public digital infrastructure generating transaction records for populations previously invisible to formal financial assessment. Financial inclusion for women in Latin America has grown meaningfully in recent years, with targeted infrastructure investment demonstrating measurable movement in the indicators automated systems use to assess eligibility. The We-Fi initiative has mobilized more than $5 billion for women entrepreneurs globally through blended finance mechanisms. Similar infrastructure applied to utility payments, mobile commerce, cooperative savings records, and community credit histories would create the data substrate necessary for automated systems to evaluate the informal economy on its own terms rather than on the terms of a formal economy that the majority of Latin American women entrepreneurs do not inhabit. That expansion of data substrate must be accompanied by equivalent governance over how that data is used: collecting more information about women in order to serve them better and collecting it in order to sort them more precisely are not the same project, and governance frameworks must be explicit about which one is being pursued.

The fourth is institutional honesty about what is being delegated. An angel investment network deploying AI-assisted due diligence tools without auditing those tools for gender performance is not a gender-neutral actor that happens to produce gender-unequal outcomes. It is an institution that has delegated its criterion to a system it has not examined and made a governance choice, by default, in favor of perpetuating the historical patterns its data encodes.

What this means for investors, regulators, and founders

If you manage capital: audit the gender performance of every AI-assisted due diligence or scoring tool your organization uses, not at the aggregate level but disaggregated by the variables the model uses as proxies. If the tool cannot produce that audit, that is itself a governance finding. An instrument that cannot be examined cannot be trusted.

If you write regulation: mandate disaggregated performance monitoring for credit scoring and investment screening systems as a condition of market access. Require providers to demonstrate performance across informal-economy segments before deployment, not after harm has accumulated. The legal foundations exist in Brazil, Chile, and Peru. The enforcement infrastructure must follow.

If you are a founder seeking capital: ask every potential investor whether their evaluation tools have been tested for gender bias. The question is not impolite. It is due diligence. An investor who cannot answer it has not examined the criteria by which they are evaluating you, and that is information you need before you accept their terms.

The decision that is always available

The WBAF, as an affiliated partner of the G20 Global Partnership for Financial Inclusion, operates in a context where the delegation of investment criteria to unexamined algorithmic systems has direct consequences for the entrepreneurs who seek capital through its networks. The committee's work proceeds from the recognition that closing the gender gap in technology and governing AI responsibly are not separate agendas. Algorithmic systems that exclude women create both ethical exposure and measurable economic risk for the institutions deploying them: the risk of misallocating capital, failing to identify opportunities that existing screening tools cannot see, and building legal and reputational exposure when governance failures that were predictable and preventable become visible.

At current rates of improvement, gender parity in venture capital allocation arrives around 2065. That is not a projection about the pace of cultural change. It is a projection about the pace of institutional change in the governance of systems that are, right now, making thousands of decisions per day about which founders are worth a conversation and which are not.

The decision to govern those systems differently is available today. The decision not to govern them is also available. It is just less often described as a decision.

References

STEMpreneurs in Latin America and the Caribbean: Reducing the Gap in Access to Capital. Washington, DC: IDB Lab, July 2024. https://doi.org/10.18235/0013077

Founders Forum Group. Women in VC and Startup Funding: Statistics and Trends, 2025 Report. London: Founders Forum, 2025.

Liu, Y., and Liang, H. Are credit scores gender-neutral? Evidence of mis-calibration from alternative and traditional borrowing data. Journal of Behavioral and Experimental Finance, Vol. 47, 2025.

Obermeyer, Z., Powers, B., Vogeli, C., and Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science, 366(6464), 447–453. October 2019.

Dastin, J. Amazon scraps secret AI recruiting tool that showed bias against women. Reuters, October 10, 2018.

Women's World Banking. Algorithmic Bias, Financial Inclusion, and Gender. New York: Women's World Banking, 2021, updated analysis 2024.

Brookings Institution. Reducing Bias in AI-Based Financial Services. Washington, DC: Brookings Institution, 2022.

World Economic Forum. Global Gender Gap Report 2024. Geneva: WEF, 2024.

World Economic Forum. Advancing Gender Parity in Entrepreneurship: Strategies for a More Equitable Future. Geneva: WEF, January 2025.

Global Entrepreneurship Monitor. GEM 2024 Women's Entrepreneurship Report. London: GEM Consortium, 2024.

Journal of Innovation and Entrepreneurship. Profile of Female Entrepreneurship in Pre-Pandemic Latin America: Trends and Challenges. Berlin: Springer Nature, December 2025.

European Parliament and Council of the European Union. Regulation (EU) 2024/1689 (Artificial Intelligence Act). Official Journal of the European Union, July 2024.

Brasil. Projeto de Lei sobre Inteligência Artificial. Brasília: Senado Federal do Brasil, approved by Senate 2024, under deliberation in Chamber of Deputies.

Perú. Ley N° 31814, Ley que establece un marco de gobernanza para la inteligencia artificial. Lima: Congreso de la República del Perú, 2023.

G20 Global Partnership for Financial Inclusion. G20 Policy Recommendations for Moving from Financial Access to Usage. Basel: GPFI, 2025. https://www.gpfi.org

World Bank. Global Findex Database 2021: Financial Inclusion, Digital Payments, and Resilience in the Age of COVID-19. Washington, DC: World Bank, 2022.

CEPAL. Panorama Social de América Latina y el Caribe 2024. Santiago: CEPAL, 2024.

International Labour Organization. Panorama Laboral 2025: América Latina y el Caribe. Geneva: ILO, December 2025.

CEPAL and Centro Nacional de Inteligencia Artificial de Chile. Latin American Artificial Intelligence Index, ILIA 2025. Santiago: CEPAL, October 2025.

World Business Angels Investment Forum. About WBAF. Istanbul: WBAF. https://wbaforum.org

About the author

Isabel Velarde is Executive Fellow in AI Governance and Responsible AI at the Digital Growth Collective, London, and President of the Gender Equality in Technology Committee at the World Business Angels Investment Forum, affiliated with the G20 Global Partnership for Financial Inclusion. She writes on AI governance, algorithmic accountability, and digital policy in Latin America.
