Shadow AI and Democratic Accountability: The Governance Gap Latin American Regulation Has Not Yet Learned to See

- Isabel Velarde

- Apr 6

The governance debate around artificial intelligence in Latin America proceeds, in most official forums, as though the central problem were institutional: which regulatory body should oversee AI systems, how risk categories should be defined, whether to follow the EU AI Act or develop something regional from first principles. These are legitimate questions. They are not the most urgent ones.

The most urgent question is what is actually happening right now, in offices and agencies and institutions across the region, while the legislation is still being drafted.

The AI is not absent. It is simply invisible.

The invisible system

Workers across Latin America are using artificial intelligence tools every day, without authorization, without oversight, and without any understanding on the part of their employers of what data is being processed, where it is going, or what decisions it is shaping. A loan officer in Bogotá uses an AI assistant to summarize a credit file. A human resources manager in Lima uses a generative AI platform to screen job applications. A woman whose career shows gaps from years of unpaid care work has her application filtered by a tool that reads those pauses as instability, and neither the institution nor the applicant knows a model was involved. A public school administrator in Mexico City feeds student performance data into a tool she downloaded on her phone. In none of these cases does the institution know. In none of these cases does the person affected know. And in none of these cases does the absence of a formal institutional AI system provide any protection, because the AI is not absent.

This phenomenon has a name. Shadow AI refers to the use of artificial intelligence tools within organizations without IT oversight, formal governance, or institutional accountability. In its strictest definition, shadow AI means unauthorized use. But in most Latin American institutions, there is no authorization framework at all, so the distinction collapses. The more precise description is: unregulated AI, operating at scale, inside institutions that have no visibility into what is happening and no mechanism to find out.

It is not a marginal phenomenon. According to a survey of more than 7,000 individuals conducted by CybSafe and the National Cybersecurity Alliance in late 2024, 38 percent of employees share sensitive or confidential information with AI platforms without their employer's approval. Google's Work:InProgress report, based on more than 3,500 interviews across Latin America including 767 in Mexico, identifies what it describes as a widening gap between the speed at which workers embrace AI and the capacity of organizations to support its use. Only 30 percent of companies in Mexico have clear policies on AI use. Only 31 percent encourage experimentation with corporate-approved tools.

The governance gap is not concentrated at the bottom of organizations. It runs through every level of them.

The gap between how quickly workers adopt AI and how few organizations have policies for it is not an administrative oversight. It is the space where consequential decisions are being made every day, by individual workers using personal AI assistants to draft reports, evaluate candidates, summarize case files, and generate assessments that then circulate through organizations as though they were the product of human judgment applied to verified information. They are not. They are the output of a model trained on data calibrated for other markets, returning results that no institution reviewed, under terms of service that no compliance officer read, on servers located in jurisdictions whose data protection laws no regulator assessed.

What institutions do not see

The populations most exposed to the consequences of shadow AI are the ones least likely to know it is happening. The International Labour Organization's 2025 Labor Panorama (the most recent data available at time of writing) documents that informality affects 46.7 percent of the employed population in Latin America and the Caribbean, and 56 percent of workers between fifteen and twenty-four years old. These are the workers whose credit files are thinnest, whose employment histories are most interrupted, whose data profiles are least likely to match the training data of systems built for other contexts.

When a loan officer uses an unauthorized AI tool to draft a credit assessment for an informal worker, the sequence is entirely invisible. The tool processes that worker's information through external servers. It generates a recommendation based on patterns learned from populations in other countries with other economic structures. It returns an output that the officer may treat as authoritative analysis. No one in that chain knows what happened: not the worker, not the bank, not the regulator. The only actor with full visibility is the AI company whose terms of service authorized the use of the data for purposes the worker never consented to and the bank never approved.

The Brookings Institution's June 2025 analysis of AI regulation in the region observes that AI systems must be designed to avoid excluding those who operate outside formal financial or employment systems by incorporating inclusive eligibility criteria and additional non-traditional data sources. That design condition presupposes that the AI systems being used are known to the institutions deploying them, subject to institutional review, and capable of being modified in response to governance requirements. Shadow AI satisfies none of these conditions. It is, by definition, invisible to the institutions that would need to impose those requirements, and it is being used at scale to inform decisions about precisely the populations for whom the design failure is most consequential.

The accountability gap

This is not only a corporate governance problem. It is a democratic accountability problem. In a constitutional democracy, the legitimacy of consequential decisions rests on a chain of accountability connecting the exercise of authority to institutions answerable to the people over whom that authority is exercised. That chain has three links: a named official who can be held responsible for a decision, a legal basis that authorizes the criterion applied, and a mechanism by which the citizen can contest the result. Shadow AI severs all three simultaneously.

In a democracy, the legitimacy of administrative decisions rests on the possibility of contestation. Shadow AI eliminates that possibility before anyone knows a decision was made.

The decision is made with AI assistance that no institution authorized, applying criteria that no institution reviewed, producing a result that the citizen has no way of knowing was AI-assisted and therefore no basis for contesting. The accountability gap does not exist in the formal structure of democratic governance. It exists beneath that structure, in the space between what institutions formally do and what the individuals within them actually do every day.

The Electronic Frontier Foundation's 2024 review of government AI use in Latin America documented that Colombia alone had 113 government automated decision-making systems in use as of 2023, a figure identified not through official government disclosure but through independent academic research mapping. This is significant: the government itself did not have a complete inventory of its own systems. This figure refers to formal systems. It says nothing about the informal AI use by individual government employees that occurs alongside and beneath those systems. Chile has developed a public algorithm repository; Brazil launched the Brazilian AI Observatory in 2024 to begin tracking state AI adoption. These are meaningful initiatives, addressing the visible portion of AI use in public institutions while the invisible portion, which by all available evidence is considerably larger, operates without any parallel transparency mechanism.

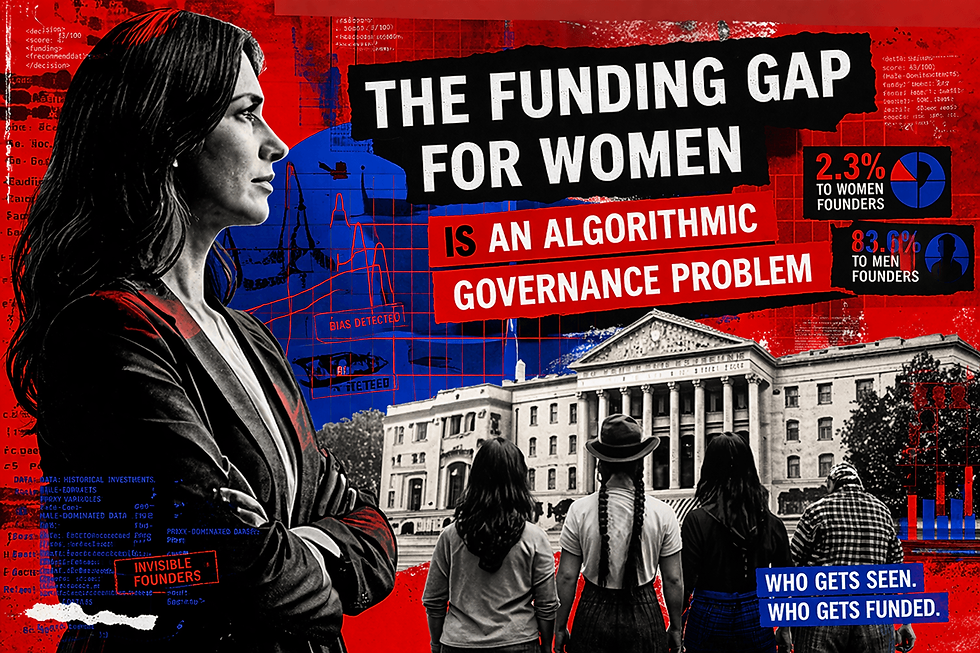

Gender as the precision instrument of exclusion

Gender is not incidental to this argument. It is the mechanism through which the structural consequences of unregulated AI are most precisely distributed. The CEPAL Social Panorama 2024 documents that the labor force participation gap between men and women in the region exceeds twenty percentage points, driven by the disproportionate concentration of unpaid care work on women. A talent screening tool used without institutional authorization by a human resources manager applies pattern recognition learned from datasets that systematically underrepresent the labor trajectories produced by caregiving responsibilities.

The tool does not know it is penalizing women with interrupted employment histories. It is doing what it was trained to do. The institution does not know the tool is being used. The woman whose application was filtered out does not know an AI tool was involved. The accountability chain that would make any of this visible and contestable does not exist, because no governance framework has been designed to reach into the space where the decision was made. This is the same mechanism documented in the 2019 Science study by Obermeyer, Powers, Vogeli, and Mullainathan on racial bias in health care algorithms: the exclusion is not in any variable. It is in the historical data the model was trained to reflect. Shadow AI replicates that mechanism at a scale no formal system could achieve, precisely because it is invisible.

A regulatory blind spot

The regulatory landscape reflects this gap with uncomfortable precision. The Americas Quarterly review of Latin American AI governance, published in July 2025, observes that what works in a wealthy, highly digitalized country may fail in a region where informal labor is common and digital infrastructure is uneven, and that AI regulation must reflect linguistic diversity, institutional capacity, and social realities. The White & Case comparative analysis of AI legislation across the region, published in 2024, notes that while Brazil and Chile have the most developed legislative proposals, some countries have made scant progress in the legislative or regulatory process. Peru is the only country in the region to have passed an AI law at the time of writing, and its implementing regulations were still being developed. Some governments have explicitly positioned lighter regulation as a strategy to attract investment. The ILIA 2025 report, produced by CEPAL and Chile's National Center for Artificial Intelligence, finds that most countries still lack adequate financing, implementation mechanisms, and evaluation systems for the national AI strategies they have adopted.

The legislation being debated, where it is being debated, is oriented toward regulating the AI systems that institutions formally deploy. It has no mechanism for addressing the AI systems that individuals use informally within those institutions, which on current evidence constitute a considerably larger share of actual AI use in the region. This is not a criticism of the legislators drafting those frameworks. It is a description of the object they are trying to regulate and the distance between that object and the reality they are trying to govern.

The Brookings Institution's February 2025 analysis of regional AI cooperation identifies an additional structural constraint. Policies and decisions implemented by one administration may be completely disregarded by the next, and this is not uncommon in the region. The fragility of regulatory continuity means that the governance gap is not only spatial, covering what is currently unregulated, but also temporal, covering what was regulated and then ceased to be.

Architecturally impressive and operationally irrelevant: a set of rules for a problem that has not yet arrived, while the problem that has already arrived continues without rules.

A different governance problem

In my previous analysis of digital extractivism in Latin America, I focused on the structural export of data and import of decision criteria: the macro-level arrangement by which economic reality is produced in the region while the criteria used to interpret it are defined elsewhere. Shadow AI is a different but related problem. It is the micro-level version of the same failure: invisible AI use happening inside institutions, bypassing governance entirely, affecting the same populations who are already underserved by formal systems.

The practical consequences are not distributed evenly. They fall in the same pattern that structural inequality in the region has always followed. The worker with the thinnest formal record, the woman with the most interrupted employment history, the rural resident whose address does not appear correctly in a verification database, the young person whose economic activity generates no digital trace in the formal systems: these are the people whose profiles are most likely to be misread by AI tools calibrated for other contexts, and they are the people with the least capacity to identify, contest, or recover from the consequences of that misreading.

The region is not, for the most part, facing the governance problem of sophisticated automated decision systems deployed at scale by well-resourced public institutions. It is facing the governance problem of hundreds of thousands of individual workers using AI tools that no one authorized, in institutions that do not know it is happening, to make decisions that affect people who have no idea the tools exist. That is a different problem from the one most AI governance frameworks are designed to solve.

Three levels of action

Addressing shadow AI requires the same three-level institutional response that formal AI governance demands, applied to a problem that most governance frameworks have not yet been designed to see.

At the institutional level: organizations must inventory the AI tools their employees are actually using. This requires anonymous internal surveys, network traffic monitoring where legally permissible, and the creation of safe reporting channels that allow employees to disclose tool use without fear of sanction. The goal is not punishment. It is visibility. An institution cannot govern what it cannot see, and most Latin American institutions currently cannot see the AI that is shaping their decisions every day. Boards and audit committees should treat shadow AI disclosure as a governance requirement equivalent to financial controls, because the fiduciary risk of unreviewed AI-assisted decisions is now material.

At the regulatory level: governments should establish, as a condition for public contracts and operating licenses, that organizations must demonstrate they have internal AI use policies, that employees have received basic AI literacy training, and that there are accountability mechanisms for AI-assisted decisions with material consequences for individuals. This does not require comprehensive AI legislation. It requires that existing accountability frameworks, including labor law, data protection, and consumer protection, be explicitly extended to cover decisions in which AI tools played a role, whether or not those tools were formally authorized. Civil society organizations, trade unions, and public ombudsmen have standing to press for this extension; their role in making shadow AI visible is as important as any regulatory initiative.

At the governance design level: the region needs frameworks built from the reality of how AI is actually being used, not from the regulatory models developed for institutions in jurisdictions with very different conditions. That means starting from the informal worker, the interrupted employment history, the address that does not resolve in a database, and designing accountability backward from those cases rather than forward from formal system architectures. The Americas Quarterly analysis puts it directly: the region's countries need not start from scratch, but they should not settle for copy-paste from international frameworks.

The question is not whether AI is being used

This is already happening inside your institution. Not as a future risk. Not as a scenario to plan for. Today, in decisions being made by your employees, your colleagues, your staff. The AI is there. The accountability is not.

Every compliance officer who has not yet surveyed which AI tools their employees use has already accepted the liability of not knowing. Every board that has not required shadow AI disclosure as part of its governance reporting has already made a choice about who is responsible when an AI-assisted decision produces harm, and made that choice by default. Every regulator who has not extended existing accountability frameworks to cover informal AI use has already granted an exemption to every institution operating in that gap.

The governance debate in the region has focused, understandably, on what institutions should require of AI systems. The more urgent question, given what the evidence shows about actual AI use, is what institutions should require of themselves. The question is not whether AI is being used inside your organization. It is whether anyone is accountable for how it is being used, and whether the people whose lives it is shaping have any way of knowing it exists.

References

Google. Work:InProgress: AI and the Future of Work in Latin America. Mountain View, CA: Google, 2025. Based on 3,500 interviews across Latin America including 767 in Mexico on AI adoption patterns, shadow AI use, and organizational policy gaps.

CybSafe and National Cybersecurity Alliance. Oh Behave! The Annual Cybersecurity Attitudes and Behaviors Report 2024. London and Washington, DC: CybSafe and NCA, 2024. Survey of 7,000 individuals on AI tool use and data sharing behavior without employer authorization.

Electronic Frontier Foundation. Deepening Government Use of AI and E-Government Transition in Latin America: 2024 in Review. San Francisco: EFF, December 2024. Documents 113 government automated decision-making systems mapped in Colombia as of 2023, identified through independent academic research, and reviews transparency initiatives across the region.

Brookings Institution. Smart AI Regulation Strategies for Latin American Policymakers. Washington, DC: Brookings Institution, June 2025. Analysis of AI adoption patterns, governance gaps, and regulatory recommendations calibrated to Latin American socioeconomic conditions.

Brookings Institution. Regional Cooperation Crucial for AI Safety and Governance in Latin America. Washington, DC: Brookings Institution, February 2025. Analysis of regional regulatory fragmentation, political continuity risks, and the Brussels Effect in Latin American AI legislation.

Americas Quarterly. Regulating AI on Latin America’s Terms. New York: Americas Quarterly, July 2025. Analysis of regulatory landscape and the need for region-specific governance frameworks.

White & Case. Foster Innovation or Mitigate Risk? AI Regulation in Latin America. New York: White & Case, 2024. Comparative legal analysis of AI legislative proposals across Argentina, Brazil, Chile, Colombia, Mexico, and Peru.

CEPAL and Centro Nacional de Inteligencia Artificial de Chile. Latin American Artificial Intelligence Index, ILIA 2025. Santiago: CEPAL, October 2025. Assessment of AI preparedness, adoption, and governance across 19 countries in the region.

CEPAL. Panorama Social de América Latina y el Caribe 2024. Santiago: CEPAL, 2024. Documents the labor force participation gap between men and women exceeding twenty percentage points across the region.

International Labour Organization. Panorama Laboral 2025: América Latina y el Caribe. Geneva: ILO, December 2025. Documents informality rates of 46.7% across the employed population and 56% among workers aged 15 to 24.

Obermeyer, Z., Powers, B., Vogeli, C., and Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science, 366(6464), 447–453. October 2019.

About the author

Isabel Velarde is Executive Fellow in AI Governance and Responsible AI at the Digital Growth Collective, London, and President of the Gender Equality in Technology Committee at the World Business Angels Investment Forum, affiliated with the G20 Global Partnership for Financial Inclusion. She writes on AI governance, algorithmic accountability, and digital policy in Latin America.
