Algorithmic Extractivism and Governance Accountability in Latin America

- Isabel Velarde

- Apr 7

Someone in your organization approved a system that decides who gets credit, who gets hired, and who gets access to public services in this region. Almost certainly, no one asked where that system was trained, on whose data, and for which market. Almost certainly, no one knows what it cannot see. That is not a technology problem. It is a governance failure, and it is happening at scale, today, inside institutions that would never accept equivalent opacity in a financial audit.

The algorithm was not designed for this market. It was designed for a different one, exported as a service, and adopted without examination.

Position determines everything

Raúl Prebisch was not a revolutionary. He was a central banker who had seen the numbers. In 1950, presenting before the United Nations Economic Commission for Latin America with the restraint of a technician, he identified a structural regularity that prevailing theory was not prepared to confront: the terms of trade between the industrialized center and the exporting periphery did not converge over time. They diverged. The center designed, standardized, and captured margins. The periphery supplied raw materials and received a fraction of the value generated. Position within a value chain, Prebisch understood, determines everything.

Seventy-five years later, the commodity has changed. The structure has not.

The new commodity: data. Every credit inquiry, every job application submitted through a digital portal, every search from a smartphone in São Paulo or Lima or Bogotá produces information that flows into processing infrastructures located primarily in the United States, Europe, and increasingly China, in jurisdictions that bear no legal or political responsibility toward the individuals whose behavior generated that data. It returns, transformed, as a decision. What appears as a sequence of technical operations is, in fact, the institutionalization of a separation: economic reality is produced in Latin America; the criteria used to interpret it are defined elsewhere.

The raw material leaves. The verdict returns.

Call it digital extractivism. Data is the raw material. The model is the manufactured product. The margin is captured where processing takes place, almost always outside the region. China is not only a processor in this arrangement: it refines critical minerals and simultaneously exports AI models to the region through platforms developed by Alibaba, Tencent, and ByteDance. The center has multiplied. The structure has not changed.

Economic dark matter

These models were built on data from economies where formal employment is the rule and financial histories are dense. When deployed in a region where, according to the International Labour Organization's Labour Overview 2025 (Panorama Laboral 2025, the most recent data available at the time of writing), 46.7 percent of employment is informal, rising to 56 percent among young people, they do not merely struggle to assess the informal economy. They structurally exclude it before any analysis takes place. This is not a performance issue. It is a design limitation.

The result is not that informal workers are classified as high risk. They are not classified at all. They fall outside the system’s field of vision. There is no record of that non-evaluation, no possibility of appeal against a decision that was never formally issued. There is only absence, and that absence, because it generates no signal, is interpreted as confirmation of the original design. Invisibility becomes self-reinforcing.

This is Latin America’s economic dark matter: productive activity that exists in reality but has no representation in the digital maps automated systems use to make decisions. The merchant who operates in cash with a flawless local reputation is invisible to a model that requires formal deposits. The professional whose career shows interruptions for care work is penalized by systems that read those pauses as instability. The asset owner whose property lacks formal registration appears nonexistent in valuation models. Economic value exists. What does not exist is its translation into the format that the system recognizes as valid evidence.

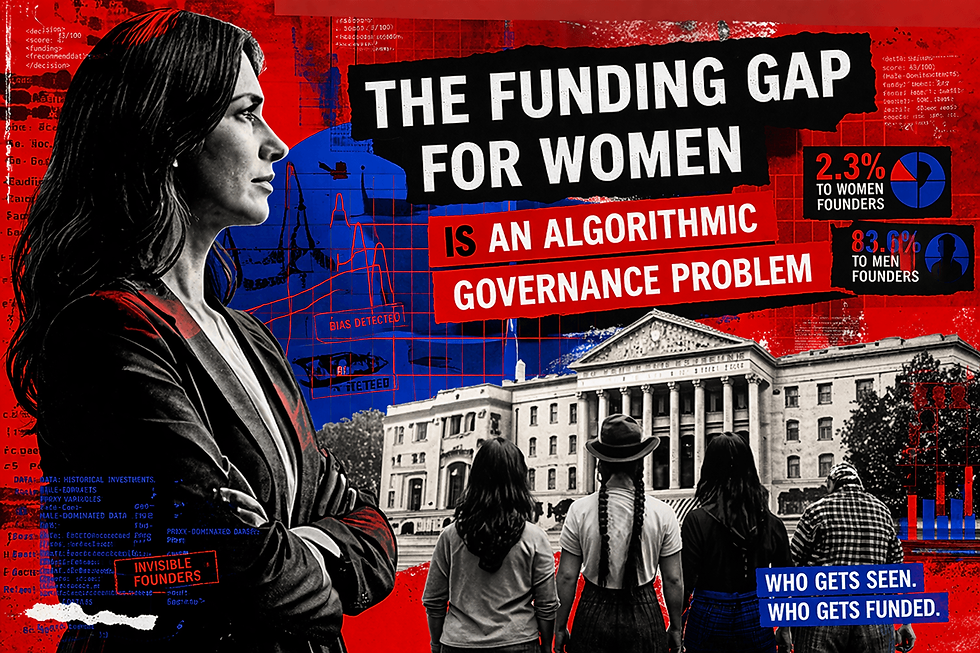

Gender as a structural mechanism

Gender is the lens through which this exclusion is most precisely reproduced. The World Bank's Global Findex Database documents that women in Latin America remain significantly underrepresented in formal financial systems, a gap not explained by income or education but by structural factors that automated systems, calibrated on data from other markets, systematically misread as risk indicators. CEPAL's Social Panorama 2024 documents a labor force participation gap between men and women exceeding twenty percentage points, driven by the concentration of unpaid care work on women. A talent-screening system trained on historical hiring data from an industry where women have been systematically underrepresented does not discriminate in any legally legible sense. It learns that the profiles associated with advancement are profiles women have historically been prevented from building, and it replicates that learning at scale.

The 2018 Amazon case is the most widely documented instance of this mechanism. According to Reuters reporting, a model trained on ten years of the company's own hiring data had learned to penalize signals associated with female candidates, not through any explicit variable, but through the historical data it had been trained to replicate. A study published in Science in 2019 by Obermeyer, Powers, Vogeli, and Mullainathan documented the same mechanism in health care: an algorithm managing care for millions of US patients systematically underestimated the needs of Black patients because it used historical cost as a proxy for health need, without accounting for the barriers that had historically constrained their access to care. The exclusion was not in the variable. It was in the data the variable reflected.
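The proxy mechanism Obermeyer and colleagues describe can be illustrated with a toy simulation. This is a minimal sketch, not the study's data or the commercial algorithm: the two groups, the need values, and the hypothetical access ratio (group B historically received 65 percent of the care its need warranted) are invented for illustration. Both groups have identical true need; the only difference is historical spending. Ranking by cost stands in for a model that predicts the proxy perfectly.

```python
from dataclasses import dataclass

# Hypothetical: fraction of need-appropriate care each group historically received.
ACCESS = {"A": 1.0, "B": 0.65}


@dataclass
class Patient:
    group: str
    need: float  # true health need, never observed by the algorithm
    cost: float  # historical spending, used as the training label (the proxy)


def make_population(needs):
    # Both groups share the same need distribution; only historical access differs.
    return [Patient(g, n, n * ACCESS[g]) for g in ACCESS for n in needs]


def select_top(patients, key, k):
    # Enroll the k highest-scoring patients in a care-management program.
    return sorted(patients, key=key, reverse=True)[:k]


def share_of_group(selected, group):
    return sum(p.group == group for p in selected) / len(selected)


needs = [float(n) for n in range(1, 11)]
population = make_population(needs)
k = 6

by_cost = select_top(population, key=lambda p: p.cost, k=k)  # what the proxy optimizes
by_need = select_top(population, key=lambda p: p.need, k=k)  # what was actually intended

print(f"Group B share when ranking by cost: {share_of_group(by_cost, 'B'):.2f}")
print(f"Group B share when ranking by need: {share_of_group(by_need, 'B'):.2f}")
```

With these invented numbers, group B's share of enrollments falls from one half to one in six, even though nothing in the ranking refers to group membership. The bias enters entirely through the label.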

The bias is not in the code. It is in the history the code was trained to repeat.

A question the room was not prepared to process

In July 2019, at the Inter-American Development Bank's Made in the Americas Global Digital Services Summit in Buenos Aires, a Chilean executive interrupted a conversation about credit scoring platforms with a question the room was not prepared to process. Have you noticed, she said, that our evaluation systems are biased? She was not referring to explicit discrimination but to something structurally deeper: algorithms designed in Silicon Valley, calibrated for markets of dense formal employment, applied uniformly to economies where nearly half the labor force operates outside the formal system. When providers were confronted with the exclusions their systems produced, the response was consistent: the model reflects the reality of the market. But the market it reflected was not the Latin American one. What was presented as neutrality was the projection of an external standard onto a structurally different context.

That conversation was not exceptional. Algorithms arrive packaged from external providers, carrying embedded assumptions about creditworthiness, employability, and eligibility that were defined, tested, and optimized elsewhere. The institutions that implement them, almost without exception, never ask what those assumptions are. They ask how much the system costs, how quickly it can be deployed, and what return it will generate. The criteria, the very definition of who is included and who is excluded, arrive by default.

Accelerated consumption, minimal governance

The Latin American Artificial Intelligence Index (ILIA 2025), published by CEPAL and Chile's National Center for Artificial Intelligence (CENIA), documents the imbalance with precision. Latin America represents 6.6 percent of global GDP but receives only 1.12 percent of global AI investment, roughly one-sixth of what its economic weight would imply. Yet the region accounts for 14 percent of global visits to AI solutions and ranks third worldwide in downloads of generative AI applications. Accelerated consumption. Minimal production. No proportional governance.

Prebisch called it the deterioration of the terms of trade. Today, Latin America faces the deterioration of the terms of decision. The region supplies the raw material of the digital economy and receives, packaged as innovation and priced as a service, the criteria that govern its own participation in the market. What is being exchanged is no longer goods. It is judgment itself.

The physical infrastructure of this ecosystem makes the double extraction concrete. According to UNCTAD's Digital Economy Report 2024, data centers consumed 460 terawatt-hours of electricity in 2022, roughly equivalent to France's total consumption, and that figure is projected to exceed 1,000 terawatt-hours by 2026. Latin America supplies not only data but the minerals that make the hardware possible. Chile and Australia together account for 72 percent of global lithium; the Democratic Republic of the Congo produces 74 percent of global cobalt; China refines more than half of both, along with a dominant share of the rare earth elements essential to semiconductor manufacturing. The World Bank projects a 500 percent increase in demand for these materials by 2050. The region extracts the material foundation of the digital economy, then pays recurring license fees to access the intelligence built on it. Mineral contribution. Digital contribution. Surplus captured elsewhere.

Delegation without oversight

The governance gap is institutional, not informational. The default response, calling for more and better data to capture informal economic activity, misdiagnoses the problem. The deficit is not in the data. It is in the accountability structures that transform data into decisions. Boards of directors across the region approve budgets, vendors, and implementation timelines. They rarely approve criteria. The definition of who is included and who is excluded arrives embedded in imported systems and, once deployed, operates continuously and at scale without any deliberative body examining the values encoded or the populations made invisible.

The absence of an explicit decision does not imply neutrality. It implies delegation without oversight, and delegation without oversight is a fiduciary failure.

Three levels of action

The response is not autarky. Latin America neither needs nor can build the entire AI stack internally. The response is to change position within the value chain, and in algorithmic governance, that means building institutional capacity to audit, condition, and when necessary refuse the criteria embedded in systems before they are deployed.

At the board and institutional level: before deploying any automated system with material impact on access to credit, employment, or public services, institutions must commission independent algorithmic audits establishing which populations the system cannot evaluate, what proxy variables it uses and what they actually measure, and who bears accountability when errors produce harm. This is not a technical exercise. It is a governance obligation equivalent in character to financial due diligence, and it should be treated as such by audit committees.
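The first audit question, which populations the system cannot evaluate, can be made concrete by logging non-evaluations explicitly instead of letting them disappear. The sketch below assumes a hypothetical vendor system that silently skips any applicant missing a required input; the field names, segment labels, sample applicants, and the 10 percent tolerance are all illustrative assumptions, not any real provider's schema or a regulatory standard.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# Hypothetical inputs a vendor model requires before it will produce a score.
REQUIRED_FIELDS = ("formal_deposits", "credit_history_months")


@dataclass
class Applicant:
    segment: str  # illustrative labels, e.g. "formal" or "informal"
    formal_deposits: Optional[float]  # None for applicants who operate in cash
    credit_history_months: Optional[int]  # None when there is no bureau record


def can_evaluate(applicant: Applicant) -> bool:
    # Mirrors a system that skips, without any record, anyone missing a required input.
    return all(getattr(applicant, f) is not None for f in REQUIRED_FIELDS)


def coverage_report(applicants, max_gap=0.10):
    """Count, per segment, how many applicants the system never evaluates."""
    totals, missed = Counter(), Counter()
    for a in applicants:
        totals[a.segment] += 1
        if not can_evaluate(a):
            missed[a.segment] += 1
    report = {}
    for segment, n in totals.items():
        rate = missed[segment] / n
        report[segment] = {
            "applicants": n,
            "not_evaluated": missed[segment],
            "rate": rate,
            "flag": rate > max_gap,  # hypothetical tolerance set by the audit committee
        }
    return report


applicants = [
    Applicant("formal", 1200.0, 48),
    Applicant("formal", 800.0, 24),
    Applicant("formal", 500.0, 36),
    Applicant("informal", None, None),
    Applicant("informal", None, 6),
    Applicant("informal", 300.0, 12),
]

for segment, row in coverage_report(applicants).items():
    print(segment, row)
```

Here every formal applicant is scored while two of the three informal applicants are never evaluated, and the report flags that segment. The design choice is that absence becomes a recorded, reviewable number rather than a silent default.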

At the regulatory level: governments should require, as a condition of market access, that providers of high-impact automated systems disclose training data provenance, demonstrate performance across informal-economy segments, and establish local accountability mechanisms. Brazil’s Lei Geral de Proteção de Dados already establishes the right to review decisions taken solely on the basis of automated processing. Colombia’s framework on financial and credit information requires lenders to demonstrate they considered factors beyond automated scoring outputs. The legal foundations exist. Enforcement infrastructure must follow.

At the data infrastructure level: the region must invest in building representative datasets that reflect its own economic reality, including informal labor, cash-based commerce, and non-registered assets, so that the next generation of models can be trained on evidence that corresponds to the populations they will govern. Brazil’s Nubank has demonstrated that local adaptation is possible: by incorporating alternative data such as utility payments and rental history, it has extended credit access to segments that traditional scoring systems rendered invisible, though not without raising privacy questions that deserve equivalent governance attention. Scaling this approach requires public investment, inter-institutional coordination, and the recognition that data infrastructure is as strategic as physical infrastructure.

Regulation is beginning to move

The European Union's Artificial Intelligence Act, published in July 2024 and in force since August 1, 2024, classifies credit evaluation, employment decisions, and access to essential services as high-risk applications subject to mandatory conformity assessments, human oversight, and the right of affected individuals to be informed that an automated system evaluated them. Obligations for most high-risk systems apply from August 2026. The Act does not apply directly to Latin American jurisdictions, but it binds many of the same external providers whose systems the region deploys, and the conditions those providers must meet to keep selling in Europe will migrate into the products they export. The direction of regulatory convergence is unambiguous. Its pace remains slower than the adoption of the systems it seeks to govern.

The time to act is before

Prebisch’s question was about who captures the surplus generated between center and periphery. The question today is about judgment: who defines it, who applies it, and who answers for its consequences. The algorithm deciding who gets credit in Lima or who gets hired in Bogotá is not a neutral instrument. It is a political choice, embedded in systems developed outside the region, exported as a service, and governed, in most cases, by no one within it.

Every board member who approved a vendor contract without reviewing the system’s evaluation criteria has already made a choice. They simply made it without knowing it. Every regulator who has not yet required disclosure of training data provenance has already granted an exemption, by default. Every institution that deployed an automated decision system without auditing its exclusions has already decided who is invisible in this market. The question is no longer whether these decisions are being made. It is whether the people responsible for them are prepared to answer for what they have put in place.

Prebisch looked at the numbers and refused to mistake a structural arrangement for a natural law. That is what Latin American institutions, public and private alike, are now required to do. The configuration he described took decades to name. This one is being named now. The time to act is not after it becomes entrenched. It is before.

References

CEPAL and Centro Nacional de Inteligencia Artificial de Chile (CENIA). Latin American Artificial Intelligence Index, ILIA 2025. Santiago: CEPAL, October 2025.

International Labour Organization. Panorama Laboral 2025: América Latina y el Caribe. Geneva: ILO, December 2025.

United Nations Conference on Trade and Development. Digital Economy Report 2024: Shaping an Environmentally Sustainable and Inclusive Digital Future. Geneva: UNCTAD, July 2024.

World Bank. Global Findex Database 2021: Financial Inclusion, Digital Payments, and Resilience in the Age of COVID-19. Washington, DC: World Bank, 2022.

CEPAL. Panorama Social de América Latina y el Caribe 2024. Santiago: CEPAL, 2024.

Obermeyer, Z., Powers, B., Vogeli, C., and Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science, 366(6464), 447–453. October 2019.

Dastin, J. Amazon scraps secret AI recruiting tool that showed bias against women. Reuters, October 10, 2018.

European Parliament and Council of the European Union. Regulation (EU) 2024/1689 (Artificial Intelligence Act). Official Journal of the European Union, July 12, 2024. In force August 1, 2024; high-risk system obligations applicable from August 2026.

Brasil. Lei nº 13.709/2018 (Lei Geral de Proteção de Dados Pessoais, LGPD). Brasília, 2018.

Colombia. Ley 2157 de 2021, que modifica y adiciona la Ley 1266 de 2008. Bogotá, 2021.

Prebisch, R. The Economic Development of Latin America and its Principal Problems. New York: United Nations Department of Economic Affairs, 1950.

About the author

Isabel Velarde is Executive Fellow in AI Governance and Responsible AI at the Digital Growth Collective, London, and President of the Gender Equality in Technology Committee at the World Business Angels Investment Forum, affiliated with the G20 Global Partnership for Financial Inclusion. She writes on AI governance, algorithmic accountability, and digital policy in Latin America.